Service growth: from zero to global availability

What does it take to build a service from scratch and scale it up to 100s of millions of users? How hard is it for a one-person company to build a service that handles 10s of thousands of QPS with low latency and is robust to failures?

I hypothesize that it is, in fact, not that hard, as long as you take care to 1) build a solid foundation for your service, 2) decouple the logical parts your service is composed of and 3) use third-party software for the non-business aspects of your service that you are unable to handle yourself.

To prove my hypothesis, I’m going to build a URL shortener service over a series of posts. Its scope is small enough that a single person can build the core functions from scratch and then evolve it into a globally available, resilient and dependable service.

Why (and what is) a URL Shortener?

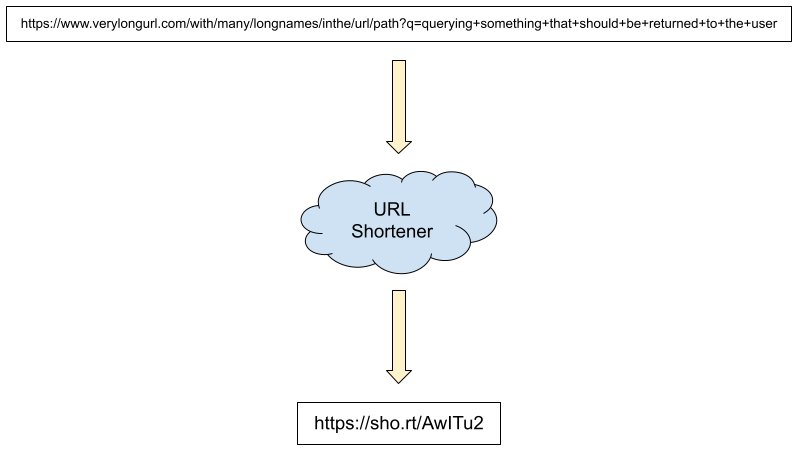

A URL Shortener is a redirection service: you give it a URL and it returns a different, shorter URL. When a user loads the short URL, they get redirected to the original one.

In the example image, the very long URL is stored in the URL Shortener service, which returns a short URL, https://sho.rt/AwITu2, that you can share with your friends instead of the long version. When they load it, the service looks up the short name (AwITu2), finds the mapping and returns an HTTP redirect status code. The browser then reads the original long URL from the redirect response (in its Location header) and loads that.

This is a very simple service and it is very easy to explain. It is also useful. Twitter, for example, has its own service, https://t.co/ , so that URLs users add to their tweets don’t consume so many characters from their limit. It also lets Twitter gather click data for every URL posted, since users are redirected through its shortening service. And it can provide protection: if a user posts a malicious URL, Twitter can scan it and disallow the post if it detects it as malicious.

What do we need to build?

From the description I gave of the service, we can clearly see what we need in order to create our own version of this service:

- A storage service that can keep the mappings between the short names and the long URLs.

- An HTTP server that can accept “shortening” requests and, given a shortened URL, redirect to the original URL.

- A protection service that can scan a URL and decide if it is safe to shorten.

Storage and an HTTP server were pretty obvious, since this is a web service and we need to keep state. The protection, on the other hand, is not as obvious, even though it should be. We don’t want to become a source of malware or malicious programs, or a place to hide illegal images. Allowing those would, at best, get our service ignored or blocked by other services and, at worst, get us into serious legal trouble.

So, our criterion for launching this service is that it must have a minimum level of abuse protection, where “minimum” will be defined in future posts. For now, let’s concentrate on how we will build the service. You know, the fun parts.

Keeping it lite

For the storage service, I’m going to use SQLite. I’m sure many of you have heard of or used it before and are probably saying “but it is not a production database!”. If you are thinking that, I don’t blame you. This was the consensus for many years, especially given the existence of PostgreSQL and MySQL/MariaDB. The main issues with SQLite were 1) that readers and writers blocked each other, so a write stalled every reader (and vice versa), and 2) that backing up your database was a manual task.

Both of these issues have been resolved.

Since version 3.7.0 (released in 2010!), SQLite has supported WAL (write-ahead logging) mode, which allows multiple readers to access the database concurrently without being blocked by a writer. You are still limited to a single writer at a time, but in our case we expect reads to be significantly more common than writes. And that is true for most user-facing web applications.

And since early 2021, an open-source tool called Litestream has been available that can continuously back up your SQLite database to S3 and a number of other storage backends. It provides the disaster recovery tools you need to restore your service if your database gets wiped out or corrupted. Its author, Ben Johnson, was hired by fly.io not long ago to continue working on SQLite and Litestream.

Go and Echo

For the language, I chose Go. It is a language I’m very familiar with, and it has great tooling for building web applications. There are a few frameworks available for it, and I chose Echo, mostly because it has a large enough community and a lot of middleware for functionality I don’t want to build myself (session management, authentication, etc.).

Compared to Python, Go is a very boring language. It is statically typed, though type inference makes it feel like a dynamically typed language to an extent; there is no method or operator overloading; it only recently got generics, and even those are fairly limited; it is not object-oriented in the way most OO languages are; and error handling is painful for some.

But to me these are qualities, because I can look at a block of code and understand exactly what it is doing without having to read multiple files or dig through the source code of my web framework of choice on a daily basis (looking at you, DRF). As a one-person business, I don’t want to have to learn too many things in order to be productive.

SQL vs ORM

One thing I didn’t mention was how I would interact with the database. SQLite uses SQL, and writing SQL queries is something I know how to do. But in many projects I’ve worked on, we’ve adopted an ORM (Object-Relational Mapper), which lets you write code in your language of choice instead of writing SQL.

ORMs have their advantages, and in my experience they tend to be better than writing and maintaining raw SQL mappings in the long term. But they have their own weaknesses, chief among them that you often end up just writing SQL in your language of choice.

My biggest problem with using SQL is not writing the queries themselves, but keeping the mapping between the code and the queries, particularly when you have to update the schema and queries. Most ORMs have good support for that.

But then I found sqlc (sqlc.dev). It’s a tool that parses your schema and your queries and generates the Go code that keeps that mapping for you. In other words, I can write SQL queries and sqlc will parse them, report parse errors if a query is faulty and generate the Go mapping code. sqlc is the right amount of indirection for me because the source of truth for database access is still the query: SQL is still used for what it is best at.

Hosting

In order for the service to be available, we need to host it somewhere with a public IP. For that I chose vpsdime. It has a Linux option with 4 vCPUs, 6GiB of RAM, 30GiB of disk and 2TiB of traffic, all for US$7.00/month. That is very cheap and more than enough for a service that handles a couple hundred QPS.
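Here is a rough sanity check of the 2TiB traffic allowance. The estimate below assumes a steady 200 QPS of redirects over a 30-day month and about 1 KiB per response. Both figures are assumptions for illustration, not measurements, and `monthlyTrafficTiB` is a hypothetical helper.

```go
package main

import "fmt"

const tib = 1 << 40 // bytes in one TiB

// monthlyTrafficTiB estimates outbound traffic for a 30-day month, assuming a
// constant request rate and a fixed response size.
func monthlyTrafficTiB(qps, bytesPerResponse float64) float64 {
	secondsPerMonth := 30.0 * 24 * 60 * 60 // 2,592,000 s
	return qps * secondsPerMonth * bytesPerResponse / tib
}

func main() {
	// Assumed: ~200 QPS of redirects at ~1 KiB each (headers plus a small body).
	fmt.Printf("%.2f TiB/month\n", monthlyTrafficTiB(200, 1024)) // prints "0.48 TiB/month"
}
```

Under those assumptions the service would use roughly a quarter of the allowance, which leaves room for larger responses or traffic spikes.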

Some would consider going with AWS/Azure/GCP and taking advantage of their free tiers. That would make it easier to buy into their ecosystems as you grow, whereas all I’m getting with vpsdime is a machine. For now, the simplicity of a single machine is enough for the project, and I’m getting quite a lot of resources for the money I’m paying. Later on, if the service grows, we can decide whether it is better to migrate to AWS or a similar provider.

But more importantly, because this is just a machine, the service can be built without any assumptions about the cloud provider, which makes moving to one of them later much easier.

Time to start

This was quite a lot of setup, but I hope I’ve made each of my choices clear: I’m optimizing for a one-person company (maybe 2 or 3 people in the future), where the number of moving parts and the amount of new knowledge need to be kept to a minimum.

In the next post we will dive into writing code for the service.